Book Reviews

Object-Oriented Metrics in Practice: Using Software Metrics to Characterize, Evaluate, and Improve the Design of Object-Oriented Systems by Michele Lanza and Radu Marinescu

ISBN: 3540244298

Publisher: Springer

Pages: 206

Over the past decade I've used metrics in virtually all of my non-toy projects. The degree and kind of metrics I use always vary with the challenges of the task at hand. I use metrics to guide me and help me answer questions about the design and progress. I've also made an active effort to let the metrics stay in the background as just one of potentially many sources of information. Use them to guide, but make sure you collect more qualitative information before acting upon potential problems. To me that's a crucial factor in the successful application of metrics; make them an end in themselves and things start to fall apart rapidly. And it won't be pretty.

First of all, metrics don't necessarily convey absolute truths. "Good" values don't guarantee that a design is fine, nor do "bad" values automatically imply a dysfunctional system. The reason is that metrics can't differentiate between accidental and essential complexity, nor do they take context into consideration.

Another common fallacy of metrics is to enforce some artificial limit. I've seen that happen with both code coverage metrics and traditional object-oriented metrics. My objection to that practice is that without context any given metric is just a number. By itself that number doesn't carry much meaning. Worse, it will probably get developers to adapt their style to fit the metrics. As soon as we start to measure something we inevitably change the environment, for good or bad. That's why it's dangerous to choose a set of metrics at the start of a project, when we know as little as we ever will about the system we're developing, and stick by them for the rest of the project. I'd rather propose an active monitoring and evaluation of metrics. As the project progresses, certain problems get addressed and new ones pop up. You want your set of chosen metrics to reflect that evolution.

Given my view of metrics and my experience with their application and possible misapplications, I was pleasantly surprised by Object-Oriented Metrics in Practice. The authors state up front that it “is important not to be blinded by metrics” and propose an interesting and critical approach to assessing software quality and designs. The authors also address the question of context: what does it mean for a metric to be good or bad? Their solution is to mine metrics from a set of existing projects (45 Java projects and 37 C++ projects) in order to establish industry-average thresholds. With those thresholds in place, the values of the actual metrics can be discussed using categories such as "low", "average" and "high".
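To make the threshold idea concrete, here is a minimal sketch of how a reference sample could be turned into "low", "average" and "high" categories. It assumes the cut-offs sit one standard deviation below and above the sample mean; that rule, the function names and the sample values are all my own illustration, not something taken from the book.

```python
from statistics import mean, stdev


def derive_thresholds(samples):
    """Return (low, high) cut-offs for a metric.

    Assumption: cut-offs are the sample mean minus/plus one sample
    standard deviation. This is an illustrative rule only.
    """
    avg = mean(samples)
    spread = stdev(samples)
    return avg - spread, avg + spread


def categorize(value, low, high):
    """Map a raw metric value onto a coarse category."""
    if value < low:
        return "low"
    if value > high:
        return "high"
    return "average"


# Made-up per-project averages of some metric (e.g. methods per class).
reference_values = [4.0, 6.0, 8.0, 10.0, 12.0]
low, high = derive_thresholds(reference_values)

for value in (3.0, 8.0, 15.0):
    print(value, categorize(value, low, high))
```

The point of the indirection is that the category, not the raw number, is what gets discussed, and the cut-offs can be recomputed whenever the reference sample changes.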

This book introduced me to another application of metrics. The authors define different combinations of metrics that are put to work to detect anti-patterns or, as the authors label them, "disharmonies". These disharmonies are defined as designs that break well-established object-oriented principles related to the various flavors of complexity, coupling and cohesion. For example, to detect God classes the authors combine a measure of high functional complexity and a measure of low class cohesion with a metric of how many external classes a specific class references. Similarly, a combination of pure size, high cyclomatic complexity, deep nesting and many variables may be used to detect a Brain method (i.e. a method that accumulates too many responsibilities and tends to centralize the functionality of its class).
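The combinations described above boil down to simple conjunctions of threshold checks. The sketch below shows the shape of such rules. The field names and every threshold value are hypothetical placeholders chosen for illustration; they are not the book's definitions or its calibrated numbers.

```python
from dataclasses import dataclass


@dataclass
class ClassMetrics:
    name: str
    complexity: int        # summed complexity of the class's methods
    cohesion: float        # 0.0 (no cohesion) to 1.0 (fully cohesive)
    foreign_classes: int   # number of external classes referenced


@dataclass
class MethodMetrics:
    name: str
    lines: int             # pure size
    cyclomatic: int        # cyclomatic complexity
    nesting: int           # maximum nesting depth
    variables: int         # number of variables used


# Hypothetical thresholds; in practice these would come from a
# reference sample rather than being hard-coded.
HIGH_CLASS_COMPLEXITY = 40
LOW_COHESION = 0.33
MANY_FOREIGN_CLASSES = 5
LONG_METHOD = 60
HIGH_CYCLOMATIC = 10
DEEP_NESTING = 3
MANY_VARIABLES = 7


def is_god_class(m: ClassMetrics) -> bool:
    """Complex, non-cohesive and reaching into many other classes."""
    return (m.complexity >= HIGH_CLASS_COMPLEXITY
            and m.cohesion < LOW_COHESION
            and m.foreign_classes > MANY_FOREIGN_CLASSES)


def is_brain_method(m: MethodMetrics) -> bool:
    """Long, branchy, deeply nested and juggling many variables."""
    return (m.lines > LONG_METHOD
            and m.cyclomatic >= HIGH_CYCLOMATIC
            and m.nesting >= DEEP_NESTING
            and m.variables > MANY_VARIABLES)


manager = ClassMetrics("OrderManager", complexity=85, cohesion=0.1, foreign_classes=12)
point = ClassMetrics("Point", complexity=4, cohesion=0.9, foreign_classes=0)
process = MethodMetrics("process", lines=140, cyclomatic=22, nesting=5, variables=15)

print(is_god_class(manager), is_god_class(point), is_brain_method(process))
```

Because every condition must hold at once, a class that is merely large, or merely coupled, is not flagged. A hit is still only a candidate for closer inspection, in line with the advice to treat metrics as one source of information among many.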

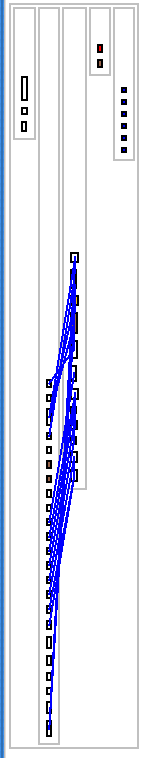

In addition to the different metrics, the authors introduce different visualizations used to investigate the detected design anomalies. Personally, I'd rather look directly at the source code. But I have to give credit for the novel visualization ideas. For example, consider the class blueprint shown above. It partitions the attributes and methods of a class according to their different roles: constructors on the left, then external interfaces (e.g. the API of the class), followed by the internal implementation in the middle, and finally the accessors and attributes. It sure makes it easy to see how the complexity and fan-out of the class are distributed.

To wrap up this post, Object-Oriented Metrics in Practice is a well-written book full of interesting techniques and ideas. I found it inspirational for my own work in a similar field.

Reviewed December 2013